the racism instinct

June 16th, 2012

Love at first sight. A feeling of fate, destiny, of Meant to Be. We’ve all been there, enthralled by a sense that in someone else we’ve found a missing piece of ourselves. From the time we’re kids, we’re told that this is the most wonderful compulsion in the world, that we should all be so lucky to have love sweep into our lives and wash away all of our fears and hesitations with its tempestuous embrace.

And yet, like all things, love too has a hidden side that we’d rather tell ourselves isn’t really there at all.

10,000 years ago something funny began to happen within the human genome. We didn’t know it at the time, but minute changes that would haunt every generation to come were slowly and imperceptibly unfolding inside each and every one of our ancestors.

Almost like flipping a switch, what had once been a steady rate of mutation suddenly increased, and “the pace of change accelerated to 10 to 100 times the average long-term rate.” These changes were driven at least in part by the development of agriculture, the domestication of both plants and animals. Some human guts retained the ability to digest lactose as adults, others adapted to deal with a grain-heavy diet. Although agriculture wasn’t the only factor and there were certainly other forces at play, we do know, without question, that 10,000 years ago mutations in our genome began to accumulate at a pace never before seen in human prehistory.

Life as we’d known it would never be the same.

The proliferation of agriculture, both domesticating animals and growing crops, brought massive changes to early human societies. One impact was that populations suddenly became much more dense; another was that humans began to interact much more frequently and closely with domesticated animals. These two factors – population density and our cohabitation with the creatures that would become our beef, pork, and poultry – allowed contagious diseases to gain a firm foothold in human societies.

“Diseases such as malaria, smallpox and tuberculosis, among others, became more virulent,” and we’ve since traced the flu back to ducks, pigs, and geese as its original hosts. Additionally, the animals that came to share our settlements, such as cats, rats, horses, cattle, sheep, goats, dogs, and birds, have all played roles in transmitting – in no particular order – anthrax, rabies, tapeworms, plague, chlamydia, and salmonella.

Empowered by their new mastery of their food supply, our ancestors once again made their way out of Africa and into the surrounding regions, and this time they were able to establish permanent settlements well north of their previous wanderings. Along the way, the most noticeable change of all occurred. Somewhere along the Black Sea coast, a baby popped out and looked up at its parents with blue eyes. This novel mutation affecting the OCA2 gene has since propagated itself across human society, hand in hand with another, subtler series of mutations.

As humans made their way out of Africa and into Eurasia and other northern latitudes, their pigmentation changed markedly and we began to differentiate into a racial spectrum.

This reduction in pigmentation had an obvious biological trigger: we’ve known for a while that the fairer your skin, the more vitamin D you’ll produce when it’s hit with sunlight.

In the past few years new information has emerged: it turns out that “vitamin D is crucial to activating our immune defenses and that without sufficient intake of the vitamin, the killer cells of the immune system – T cells – will not be able to react to and fight off serious infections in the body.” Without enough vitamin D in our bodies, our immune systems simply cannot function. Labeling it a “vitamin” is a bit of a misnomer; it’s actually a hormone that functions as:

“…a potent antibiotic. Instead of directly killing bacteria and viruses, [vitamin D] increases the body’s production of a remarkable class of proteins, called antimicrobial peptides. The 200 known antimicrobial peptides directly and rapidly destroy the cell walls of bacteria, fungi, and viruses, including the influenza virus, and play a key role in keeping the lungs free of infection.”

Additionally, it turns out vitamin D “seems important for preventing and even treating many types of cancer… Four separate studies found it helped protect against lymphoma and cancers of the prostate, [colon], lung and, ironically, the skin.”

And vitamin D isn’t just another hormone; in fact, it’s tied to our very humanity. Or at least our very simian-anity, as vitamin D’s vital role in primate immunology emerged within our genome over 60 million years ago and carries a functionality that is unique to us and our furrier brethren – no other animal on earth except primates shares the DNA which ties vitamin D to antibacterial peptide synthesis. Besides helping fight off infection, it prevents the autoimmune overreaction of the body fighting itself, and so it “may enable suppression of inflammation while potentiating innate immunity, thus maximizing the overall immune response to a pathogen and minimizing damage to the host.”

The conventionally accepted theory has been that children with lighter skin were more likely to survive in northern latitudes because children with darker skin fell victim to rickets and other prenatal conditions that occur when pregnant mothers don’t get enough vitamin D. But both preventing and treating rickets requires only 400 IU of vitamin D a day.

And so when you consider that “a fair skinned person, sitting on a New York beach in June for about 10-15 minutes… is producing the equivalent of 15,000-20,000 IU’s of Vitamin D” and that “African-Americans with very dark skin have an SPF of 15, and, thus, their ability to make vitamin D in their skin is reduced by as much as 99%” it becomes readily apparent that racial differentiation emerged to drastically ramp up vitamin D production to fight disease, not merely to prevent a mild deficiency.

Lighter skin didn’t develop just to prevent rickets, but was in fact a vital adaptation to fight back against the growing array of diseases that were beginning to proliferate throughout crowded early agricultural communities. And it wasn’t that agriculture removed vitamin D from the diets of early societies, because the amount of vitamin D in food is negligible – especially compared to the thousands and thousands of IU created by sunlight in fair-skinned individuals. Unless you want to argue that giant 400-pound cod were roaming the African veld, any argument that centers on diet is spurious at best. Indeed, “it is almost impossible to get your vitamin D needs met by food alone.”

If anything, agriculture may actually have reduced the vitamin D levels of early societies, since a growing body of evidence points to the idea that much of the cereal that was grown would’ve been used to brew beer instead of bake bread: “the first thing [early societies] did with grain… was make it into beer.”

And even in the New World, isotopic analysis of human skeletal remains indicates that Native Americans were probably brewing their maize into wine rather than baking with it. Much of the early pottery – now broken bits of jugs and amphorae – may in fact have been used to store alcoholic beverages. Primitive brewing likely started off as an attempt to mimic natural processes that produce ethanol, such as fermenting fruit or rainwater pooling with honey, which animals were observed getting crunk off of; these processes were far simpler to imitate, or to stumble on by accident, than baking.

But why beer instead of bread? It’s all about the calories, and public health.

Drinking weak alcoholic beverages would’ve actually been safer than drinking water, since beer is generally sterile and even has antiseptic properties, and it’s certainly more nutritious, since beer contains a wide range of important micronutrients such as folic acid, silicon, and B vitamins. Brewing cereal into beer instead of baking it into bread would’ve been a primitive form of preservation as well: without refrigeration, bread starts to spoil within a week, whereas the alcohol and yeasts in beer act as preservatives and greatly extend its shelf life.

Social interaction is what gave birth to our first primate civilizations, so the importance of feasts and celebrations in early societies would’ve made beer a vital social lubricant – or to flip the metaphor on its head, the very glue that first began to hold civilization together. And so, since alcohol has the unique effect of blocking the synthesis of vitamin D, agriculture and beer consumption would’ve contributed to deficiencies and further increased the need to get more exposure to sunlight.

Native peoples at northern latitudes whose skin didn’t lighten as much stayed darker because agriculture never caused their societies to become crowded or involved with animals enough for disease to become a significant pressure. After all, it was “the strength of selection as people became agriculturalists—a major ecological change—and a vast increase in the number of favorable mutations as agriculture led to increased population size” which, in turn, led to the mutations which lightened northern agriculturalists’ skin.

The racial groups we have now emerged as a direct consequence of migrating into more northern latitudes: lightened skin gave dense agricultural communities the strengthened immune systems they needed to fight off the lethal pathogens and parasites that became an increasing threat to crowded early human societies. And as Guns, Germs, and Steel outlined, as different racial groups domesticated and interacted with different animals, they developed communal immunities to discrete sets of diseases:

“Disease-causing pathogens—viruses, bacteria and protists—have geographies, both in terms of where they can be found and how common they are within those regions. The consequent map of malaise and death affects many aspects of the human story”

Racial groups in Eurasia, Africa, the Americas, and Southeast Asia were each exposed to unique biological threats, depending on the region they inhabited and the animals they domesticated. Although there was some overlap, their exposures were still discrete enough that when one community came into contact with another, widespread pandemics of the strangers’ diseases often occurred.

For people living shoulder-to-shoulder with each other and with their newly domesticated livestock, disease became a much bigger threat than it had ever been to early hunter-gatherer communities. This immunological legacy is still with us, as different racial groups show different rates of a vast array of common diseases to this day.

So why is any of this a bad thing?

Within the past few years everyone from Cosmo to Nature has reported on the results of a surprising study: women can, it would seem, smell who they’re attracted to. Bags containing t-shirts that various men had worn while exercising served as sweaty glass slippers: women were asked to rate the attractiveness of the scent contained in each bag, and every white t-shirt was exactly the same except for the odor of the man who’d worn it. Women rated each bag and went on their way, never to learn anything more about the prospective mates than what their used laundry smelled like. And when researchers went to work trying to figure out why women made the choices they did, a striking and consistent link was found: women are attracted to men whose major histocompatibility complex (MHC) genes most complemented their own:

Women’s preference for MHC-distinct mates makes perfect sense from a biological point of view. Ever since ancestral times, partners whose immune systems are different have produced offspring who are more disease-resistant. With more immune genes expressed, kids are buffered against a wider variety of pathogens and toxins.

MHC genes are the gatekeepers of our immune systems: they govern white blood cell function and determine whether a transplant recipient will accept a donor organ as its own or attack it as a foreign invader. The better suited your MHC genes are to fighting the pathogens present in your environment, the healthier you’ll be.

And not only healthier, but more intelligent too. A study of IQ scores and infectious disease found that both internationally between nations and nationally between states, IQ levels correlate more closely with the rate of infectious disease than any other factor. Given how biologically taxing it is for children to fight off disease, and the fact that our brains suck up 90% of our energy as newborns and one-quarter of our energy as adults, it stands to reason that healthier societies end up more intelligent on the whole and that instincts which select for healthy progeny would emerge.

So when it comes to picking a mate who will pass half of their MHC genes on to your child and largely shape how well suited its immune system is to the environment, the trick is determining exactly what “complement” means, as researchers found that “women are not attracted to the smell of men with whom they had no MHC genes in common. ‘This might be a case where you’re protecting yourself against a mate who’s too similar or too dissimilar, but there’s a middle range where you’re okay.'”

Who would have MHC genes that would complement yours? Members of a population that underwent immunological pressures similar to those your ancestors faced – but not exactly the same. Not a family member or close relative, but someone whose ancestors adapted to similar pressures created by the diseases that became so prevalent in crowded communities that were in close contact with each other and with the livestock they raised.

These pressures began roughly 10,000 years ago, around the time our first blue-eyed ancestor was born and racial differentiation began to emerge. And so it would make a certain amount of biological sense for us to be compelled to make babies with members of our own race, to ensure that our kids have an immune system suited to the immunological pressures they would encounter:

“Body odor is an external manifestation of the immune system, and the smells we think are attractive come from the people who are most genetically compatible with us.” Much of what we vaguely call “sexual chemistry” is likely a direct result of this scent-based compatibility.

And this compatibility isn’t expressed outwardly only in scent; we also wear our MHC genes somewhere even more obvious than our sleeves: directly on our faces. Multiple studies have shown links between the range of human MHC genes and facial appearance, as well as between MHC genes and perceived attractiveness. It would follow that simply by looking at a member of another race, we would immediately know on an instinctual level that their MHC genes would be wildly dissimilar to our own.

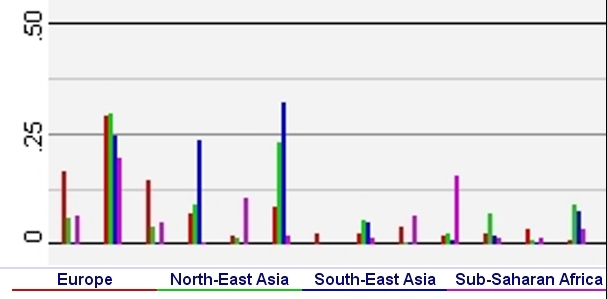

A look at the National Center for Biotechnology Information’s online database reveals these disparities throughout our MHC genes. As this probabilistic selection of some of the HLA-A alleles illustrates, although some alleles occur at similar rates across populations, the frequencies of many others vary widely:

Each grouping represents a different HLA-A allele: 1, 2, 3, 11, 23, 24, 25, 26, 29, 30, 31, 32, 33

All of this makes a tremendous amount of sense: an instinct to create offspring with someone who is going to provide your child with the best odds of having a robust immune system would have been vital for any community under heavy pressure from disease. It’s important to keep in mind, though, that this instinct would have begun to emerge 10,000 years ago, long before any sort of antibiotics or sterile surgery. Medical science has advanced incredibly quickly: as recently as 150 years ago, doctors didn’t even realize they should wash their hands before jamming them up inside someone. Modern medicine has arguably rendered this instinct obsolete in practical terms, and yet it’s still wired into us as part of our primitive heritage.

But just because something’s an instinct doesn’t by any means make it Right. We also have instincts for violence and promiscuity that would cause our societies to implode if they weren’t regulated by human reason and rational decision-making. Human societies are epic exercises in not embracing our basest instincts; individuals are encouraged to do their best not to act like animals. And yet, all that said, it doesn’t make the instinct to breed with someone who’s going to provide your brood with the best-suited immune system for the environment any less real, or any less a part of who we are.

So human females indicate a preference for mating with someone who shares a similar ancestry, but would that necessarily mean a distaste for outsiders? At least for ovulating women, yes, it would.

It turns out that fertile women exhibit a strong implicit bias against men from other races. While ovulating women were more attracted to men of their own race who were perceived as physically imposing, the opposite was true if the man was a member of a different racial group. So not only are we drawn to people who share an ancestry similar to our own, but human females are unconsciously repelled by members of other races when they’re fertile.

An increasingly common refrain in America is that it’s very easy to pretend that racism is dead and gone, at least until a black guy knocks on your door intending to take your daughter out on a date. This continued prejudice was enshrined in our laws against miscegenation, which were the last racist laws to leave our books and weren’t ruled unconstitutional until Loving v. Virginia in 1967, more than a decade after Brown v. Board of Education, at which point only 20% of Americans were in favor of legalizing interracial marriage. And yet despite that ruling, Alabama had a law against interracial marriage on the books until 2000, and even then about 40% of Alabama voters opposed repealing it.

African-Americans may be especially likely to face discrimination, as recent analysis of the human genome has revealed that all non-African humans possess some Neanderthal DNA. And this DNA isn’t just random snippets: it includes an MHC allele that’s absent in Africans and would’ve boosted non-African immune systems as they traveled away from the continent.

Although women clearly have a much stronger sense of smell and are much more attuned to finding partners with compatible MHC genes, men are still aware of this interaction and their opposition to members of an outside race mate-pairing with sisters and daughters who share their genetic code makes evolutionary sense: they want their communal family gene pool to stay immunologically robust. Additionally, the apparent instinct of being able to smell and see a stranger’s MHC genes may easily have caused an instinctual xenophobia to develop, since someone with vastly different MHC genes would often be harboring pathogens that your immune system would be utterly defenseless against.

And it’s not just fertile females who exhibit a prejudice against members of other races: recent research into implicit bias indicates that most white folks are unconsciously quite a bit racist. Research at Yale supports the idea that many whites are unconsciously biased, whether they admit it to themselves or not, and no matter how many black friends they have. The following experiments required a rather complicated set-up, but to get an idea of what your own implicit and unconscious feelings about race actually are, you can spend a few minutes on Harvard’s Implicit Association Racial Test.

In the first experiment, when white test subjects either read about or watched a video of a white guy calling a black man a “clumsy nigger” or saying “I hate when black people do that” after being accidentally bumped into, they frequently claimed they would have confronted the guy making the racist remark if they’d been there, and at least 75% of them said they’d rather work with the black guy in the scenario than the racist white guy.

But when subjects were actually in the room and the racial epithet was spewed in their presence, none of them spoke up, and 71% of them said they’d rather work with the openly racist white guy than the black man. But it gets a lot worse, as demonstrated by one recent study conducted over the course of six years at Stanford:

…that showed [white] study participants words like “ape” or “cat” (as a control) and then a video clip of a television show like “COPS” in which police are beating a man of unknown racial identity. Then, the researchers showed the participants a photo of either a black or white man, described him as a “loving family man” yet with a criminal history. They then asked participants to rate how justified they thought the beating was. Those who believed the suspect was black were more likely to say the beating was justified when they were primed with words like “ape.”

This led researchers to the uncomfortable conclusion that “whites subconsciously associate blacks with apes and are more likely to condone violence against black criminal suspects as a result of their broader inability to accept blacks as ‘fully human.'” But all of these subjects were at least college-aged; perhaps nothing instinctual is going on and they’re just displaying culturally learned behaviors. Well, if that were the case, why are nine-month-old babies racist too?

It turns out that white nine-month-old babies with little or no previous contact with African-Americans have trouble telling black faces apart and don’t register emotions on black faces as well as they do on white faces:

“These results suggest that biases in face recognition and perception begin in preverbal infants, well before concepts about race are formed,” said study leader Lisa Scott in a statement. The shift in recognition ability was not cultural, rather a result of physical development.

Curiously, though, five-month-old babies seem to process faces from either race the same way. But it’s important to note that babies don’t develop a fear of heights or a fear of strangers until they’re around seven months old. Five-month-old babies might seem to process “faces” of different races the same way, but without a fear of strangers it’s hard to argue that their brains have developed to the point where they can understand the concept of personhood in the first place. And without any fear of heights, it would seem their brains haven’t yet reached the point where any fear instinct at all would have kicked in.

Plenty of research has been done into racism as a cultural reality, but very little, if any, has examined it as a naturally occurring instinct that’s inexorably rooted in our biology. And even if you haven’t been on Xbox Live or an internet message board recently, if you take a moment to look at the data it becomes readily apparent that American society is still unarguably organized largely along racial lines.

What if the policies that have created these abject discrepancies aren’t simply a result of learned cultural behavior, but are in fact deeply rooted inside our genome? If an aversion to members of outside racial groups is rooted in our biology, and not culture, the basic interactions of societies with mixed racial groups, and the policies they enact, should be very carefully reexamined.

In a perfect world our children would be judged by the content of their character, but if the color of their skin is linked to a subtle unconscious instinct for racism within each and every one of us, we aren’t going to get anywhere until we bring this vicious racial chimera out from its genomic cave and deal with it directly.

“Nothing in all the world is more dangerous than sincere ignorance and conscientious stupidity.”

– Dr. Martin Luther King Jr.

Category: Africa, anthropology, biological racism, genetic compatibility, genetics, human genome, human migration, immunology, impact of agriculture, MHC genes, racial tension, racism, smell a mate, war on drugs | Tags: genetic compatibility, genetics, MHC genes, news